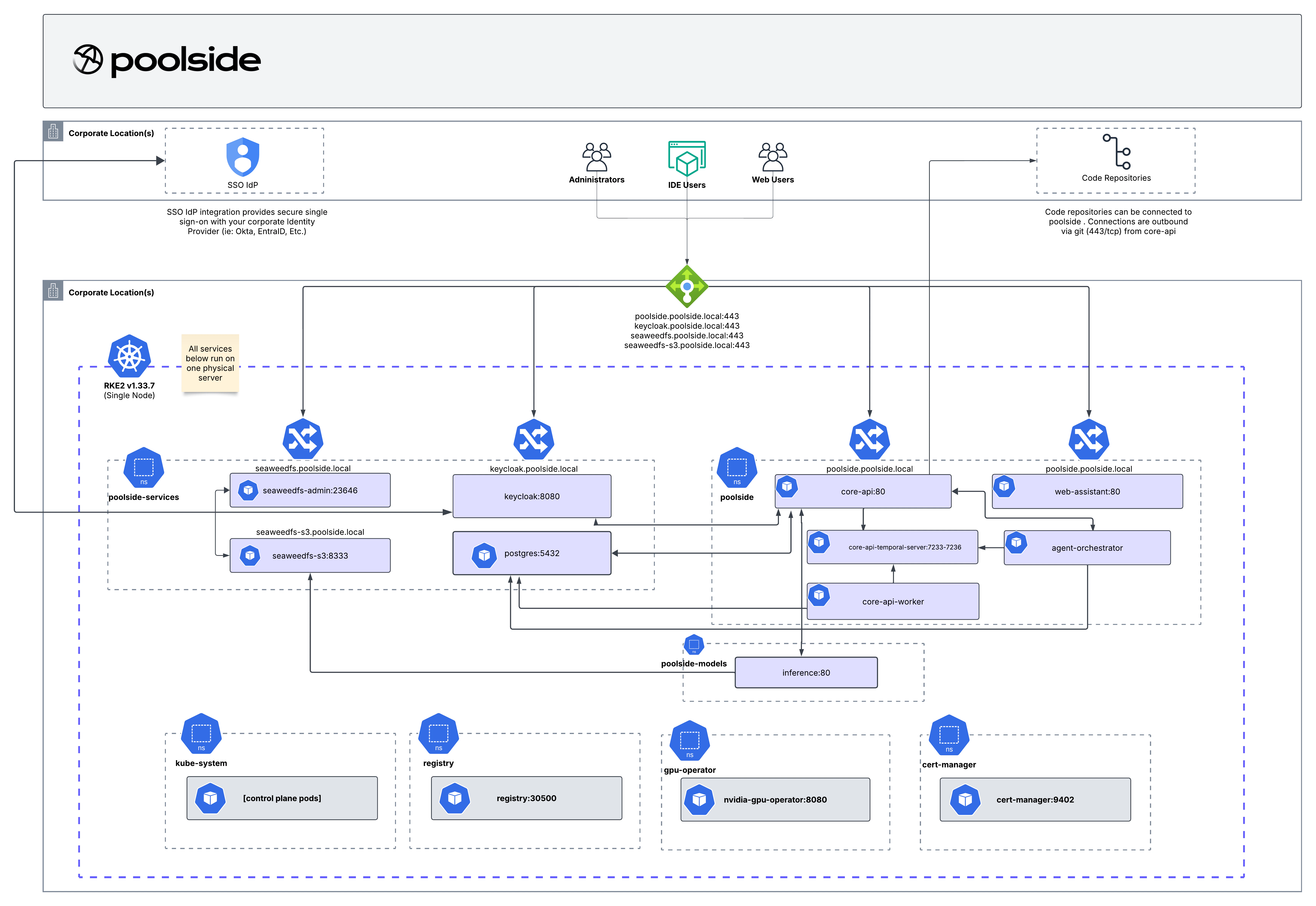

Architecture

- Install the platform on upstream Kubernetes

- Choose and configure model inference on upstream Kubernetes

Model options

After the platform is running, choose how you want to serve models:

Poolside-managed provisioning

Provision inference servers through the Poolside platform.

Local GPU inference

Serve models from GPU-backed inference workloads in your cluster.