- Platform: The Poolside application services and their dependencies, including Kubernetes, database, object storage, container registry, ingress, TLS certificates, and identity provider configuration.

- Model deployment: The inference stack that serves Poolside models on local GPU-backed hardware.

Deployment paths

Amazon EKS

Deploy Poolside on Amazon EKS using Helm, with an optional reference architecture for platform components.

Red Hat OpenShift

Deploy Poolside with Helm on your OpenShift cluster.

Kubernetes

Deploy Poolside with Helm on your self-managed Kubernetes cluster, such as RKE2 or Charmed Kubernetes.

If you already run the Terraform-based AWS deployment bundle, see Amazon EKS with Terraform (legacy) and the migration guide.

Deployment management

Poolside cloud deployments are self-managed, so you control the installation process and infrastructure. For cloud-specific platform components such as the Kubernetes control plane, we provide reference architectures and documentation so you can install and manage the platform independently. You still receive support for installation and configuration.

- Amazon EKS reference architecture

Architecture

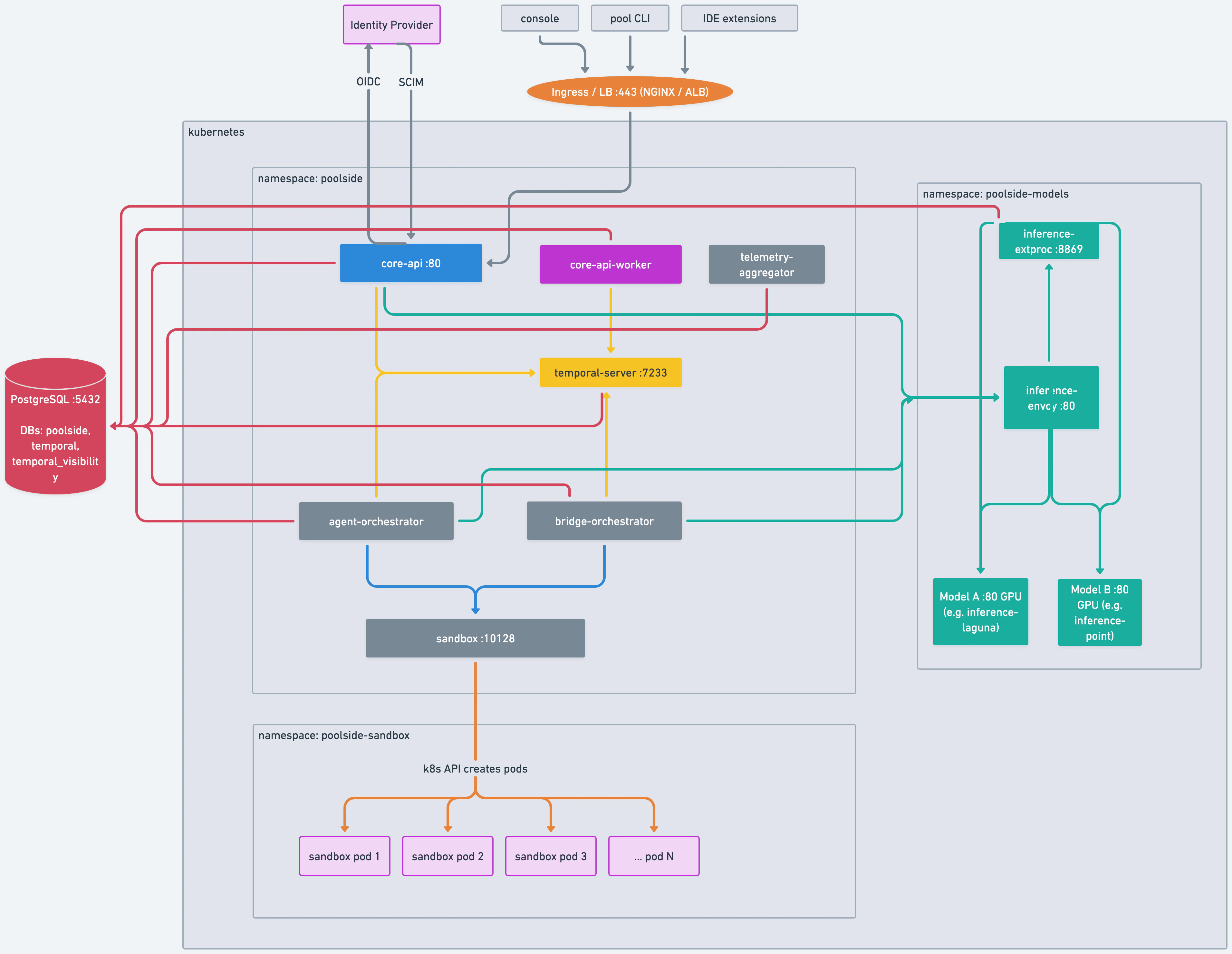

The following diagram shows the Poolside application architecture and how components interact within a Kubernetes cluster.

- Client access: The console, pool CLI, and IDE extensions connect to the platform through an ingress or load balancer.

- Application services: The API server, Temporal workflow engine, workers, telemetry aggregator, and agent orchestrator.

- Inference stack: The Envoy proxy, external process filter, and GPU-backed inference for models such as Laguna and Point.

- External dependencies: An identity provider for OIDC and SCIM, a PostgreSQL database, and optional cloud-hosted models such as OpenAI-compatible endpoints.

Operational considerations

- Service availability: All external services your deployment depends on (including object storage, container registry, database, and identity provider) must be reachable from within the cluster. If you use local GPU inference, the cluster must have access to compatible GPU hardware. If you use a cloud-based inference service, ensure the service is available in the region where you deploy.

- Backup and recovery: You are responsible for backup and recovery for the infrastructure and external services in your selected deployment path, such as PostgreSQL, object storage, container registry contents, and Kubernetes configuration.
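The service availability requirement above can be spot-checked with a small script run from a pod inside the cluster. The following is a minimal sketch, not a Poolside tool: the hostnames and ports in `DEPENDENCIES` are hypothetical placeholders, and the helper names (`tcp_reachable`, `check_dependencies`, `unreachable`) are illustrative. It only confirms that a TCP connection can be opened. It does not validate TLS certificates, credentials, or GPU availability.

```python
import socket
from typing import Callable, Dict, List, Tuple

Endpoint = Tuple[str, int]

# Hypothetical endpoints (assumptions): replace with your deployment's values.
DEPENDENCIES: Dict[str, Endpoint] = {
    "database": ("postgres.example.internal", 5432),
    "object-storage": ("storage.example.internal", 443),
    "container-registry": ("registry.example.internal", 443),
    "identity-provider": ("idp.example.internal", 443),
}


def tcp_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port opens within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections, and timeouts.
        return False


def check_dependencies(
    deps: Dict[str, Endpoint],
    probe: Callable[[str, int], bool] = tcp_reachable,
) -> Dict[str, bool]:
    """Probe every dependency and map its name to a reachability flag."""
    return {name: probe(host, port) for name, (host, port) in deps.items()}


def unreachable(results: Dict[str, bool]) -> List[str]:
    """Return the sorted names of dependencies that failed the probe."""
    return sorted(name for name, ok in results.items() if not ok)
```

Calling `unreachable(check_dependencies(DEPENDENCIES))` from inside the cluster returns the names of any dependencies that could not be reached. An empty list means every endpoint accepted a TCP connection. The `probe` parameter is injectable so the check can be swapped for something stricter, such as an HTTPS health request, without changing the rest of the script.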