Overview

Poolside publishes an AWS reference architecture that pairs the Helm-based deployment with an opinionated AWS foundation. Use it to plan your deployment, to align on key decisions before installation, or to provision the AWS infrastructure directly with the reference Terraform. You provision the AWS infrastructure; Poolside provides the Helm chart and an optional Terraform reference stack that wraps the same chart. You can apply the Terraform as published, fork it, or reproduce the architecture by hand against your own infrastructure-as-code standards. The reference architecture is published in the poolsideai/reference_architectures repository, alongside the Terraform modules, example roots, and supporting documentation.

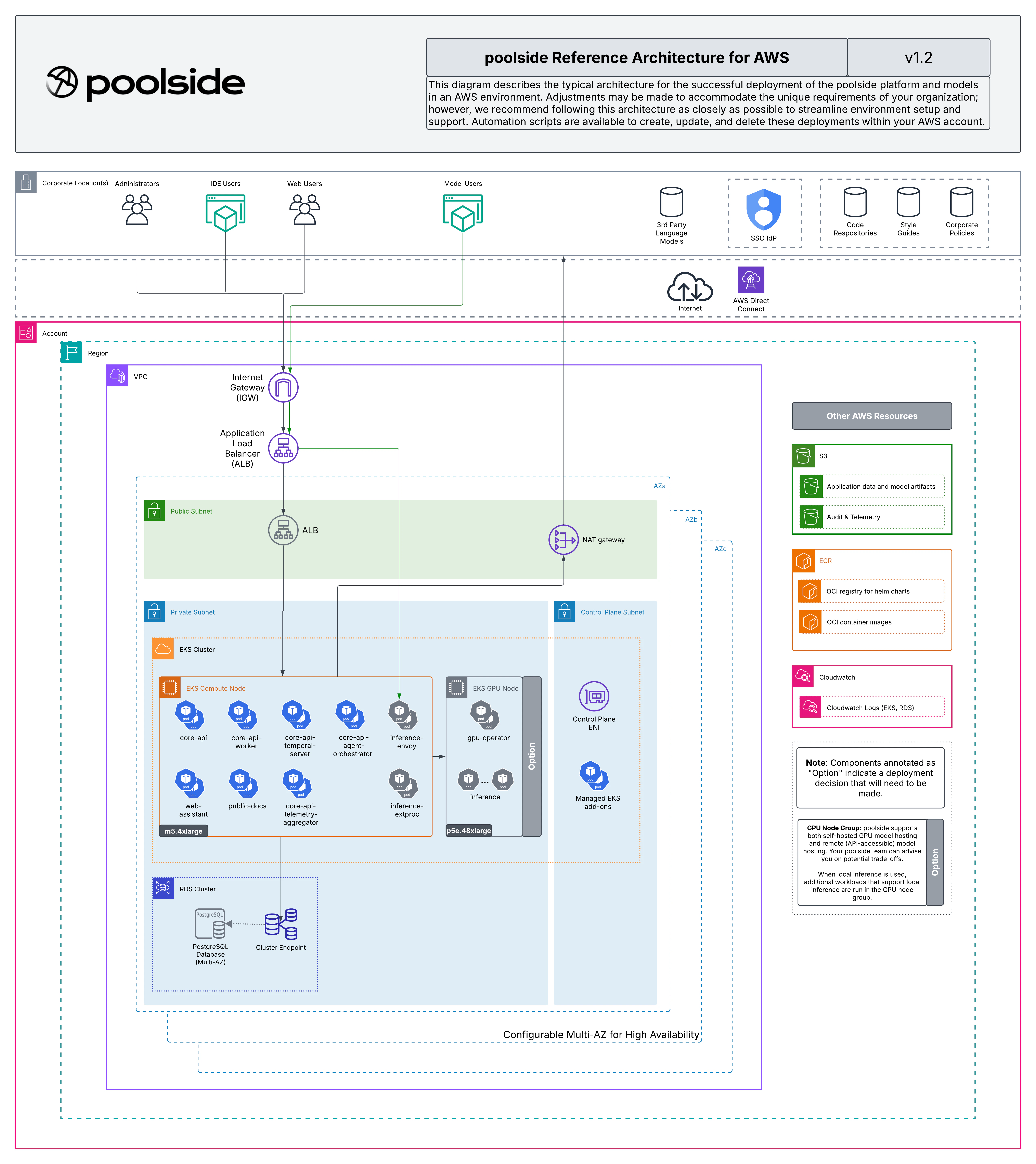

The diagram and architecture description on this page mirror the AWS reference architecture at version 1.2 (April 2026). For the source documents, Terraform modules, and example roots, see the reference_architectures repository.

Architecture

Network

A dedicated VPC with three subnet tiers across multiple availability zones:

- Public subnets: NAT gateways and the internet-facing Application Load Balancer

- Private worker subnets: EKS worker nodes (CPU and GPU) with outbound internet through the NAT gateways

- Private control plane subnets: EKS control plane network interfaces, which AWS manages
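To make the three-tier layout concrete, the following Python sketch carves a VPC CIDR into one subnet per tier per availability zone using the standard `ipaddress` module. The /16 VPC range, the equal /20 subnet size, and the tier labels are assumptions for illustration; this page does not specify the CIDR sizing the reference Terraform uses.

```python
import ipaddress

# Hypothetical tier labels matching the three tiers described above.
TIERS = ("public", "private-worker", "private-control-plane")


def plan_subnets(vpc_cidr: str, azs: list[str], new_prefix: int = 20) -> dict[str, dict[str, str]]:
    """Assign one equal-sized subnet per (tier, AZ) pair, in order, from the VPC CIDR."""
    vpc = ipaddress.ip_network(vpc_cidr)
    blocks = vpc.subnets(new_prefix=new_prefix)  # lazily yields consecutive blocks
    plan: dict[str, dict[str, str]] = {}
    for tier in TIERS:
        plan[tier] = {}
        for az in azs:
            try:
                plan[tier][az] = str(next(blocks))
            except StopIteration:
                needed = len(TIERS) * len(azs)
                raise ValueError(f"{vpc_cidr} cannot hold {needed} /{new_prefix} subnets") from None
    return plan


if __name__ == "__main__":
    layout = plan_subnets("10.0.0.0/16", ["us-east-1a", "us-east-1b", "us-east-1c"])
    for tier, subnets in layout.items():
        print(tier, subnets)
```

Equal-sized subnets keep the sketch short. In practice the worker tier usually needs the most addresses, because the VPC CNI assigns pod IP addresses from the worker subnets.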

EKS cluster

A managed Kubernetes cluster with:

- An OIDC provider for IAM roles for service accounts (IRSA)

- Managed EKS add-ons: `vpc-cni`, `kube-proxy`, `coredns`, `metrics-server`, `snapshot-controller`, and `aws-ebs-csi-driver`

- A public API endpoint protected by a mandatory CIDR allowlist, plus a private endpoint for in-VPC traffic

- Cluster admin access through EKS access entries

- Envelope encryption of Kubernetes Secrets with a customer-managed KMS key
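IRSA lets a pod exchange its service account token for credentials of an IAM role whose trust policy names the cluster's OIDC provider. A minimal sketch of such a trust policy follows. The account ID, issuer URL, and the `core-api` service account name are placeholders; the reference Terraform generates its own policies, and the `aws` partition in the ARN assumes a commercial AWS Region.

```python
import json


def irsa_trust_policy(account_id: str, oidc_issuer_url: str, namespace: str, service_account: str) -> dict:
    """Trust policy letting one Kubernetes service account assume an IAM role through IRSA."""
    # The IAM OIDC provider is identified by the issuer URL without its https:// scheme.
    issuer = oidc_issuer_url.removeprefix("https://")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Federated": f"arn:aws:iam::{account_id}:oidc-provider/{issuer}"},
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    "StringEquals": {
                        # Only this namespace/service account pair may assume the role.
                        f"{issuer}:sub": f"system:serviceaccount:{namespace}:{service_account}",
                        f"{issuer}:aud": "sts.amazonaws.com",
                    }
                },
            }
        ],
    }


if __name__ == "__main__":
    policy = irsa_trust_policy(
        "111122223333",  # placeholder account ID
        "https://oidc.eks.us-east-1.amazonaws.com/id/EXAMPLE",  # placeholder issuer
        "poolside",
        "core-api",  # hypothetical service account name
    )
    print(json.dumps(policy, indent=2))
```

The pod's service account then carries an `eks.amazonaws.com/role-arn` annotation that points at the role.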

Node groups

- A CPU node group that runs the Poolside platform services, the AWS Load Balancer Controller, the External Secrets Operator, and cluster add-ons.

- An optional GPU node group that runs model inference workloads through the NVIDIA GPU Operator. The reference architecture supports EC2 capacity reservations for guaranteed GPU instance availability.

Data stores

- Amazon RDS for PostgreSQL with the master password managed by AWS, stored in AWS Secrets Manager and never written to Terraform state. Multi-AZ by default, with Performance Insights and CloudWatch log exports enabled.

- Amazon S3 buckets for model artifacts, telemetry, and repositories, plus a separate access log bucket. All buckets use SSE-KMS encryption with public access blocked.

- Amazon ECR repositories, one per container image the Helm chart needs, namespaced under the deployment name.
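The following sketch derives one ECR repository name per image under a deployment-name prefix and checks each name against the repository-name pattern given in the ECR `CreateRepository` API reference (treat the exact pattern as an assumption and verify it against the current reference). The `<deployment>/<image>` scheme and the image names are hypothetical; the actual names come from the images the Helm chart references.

```python
import re

# Assumed ECR repository-name pattern (lowercase segments separated by "/").
ECR_NAME = re.compile(r"(?:[a-z0-9]+(?:[._-][a-z0-9]+)*/)*[a-z0-9]+(?:[._-][a-z0-9]+)*")


def ecr_repository_names(deployment: str, images: list[str]) -> list[str]:
    """One repository per image, namespaced under the deployment name (hypothetical scheme)."""
    names = []
    for image in images:
        name = f"{deployment}/{image}"
        if not (2 <= len(name) <= 256 and ECR_NAME.fullmatch(name)):
            raise ValueError(f"invalid ECR repository name: {name!r}")
        names.append(name)
    return names


if __name__ == "__main__":
    # Hypothetical deployment and image names.
    print(ecr_repository_names("acme-prod", ["core-api", "web"]))
```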

Security

- Customer-managed KMS keys for EKS Secret encryption, RDS storage, S3 objects, EBS volumes, and the application-level encryption used by `core-api`.

- IAM roles with least-privilege policies for the node groups, the EKS cluster, and IRSA workloads (`core-api`, `inference`, External Secrets Operator, AWS Load Balancer Controller, EBS CSI driver, and VPC CNI).

- Optional permissions boundary support: a single boundary ARN threads through every IAM role for regulated environments.
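Threading one permissions boundary through every role makes the boundary ARN a single, uniform input: when it is set, every role carries it. The sketch below illustrates that rule. Role names follow a hypothetical `<deployment>-<suffix>` scheme, and the dictionary keys mirror the `RoleName` and `PermissionsBoundary` parameters of the IAM `CreateRole` API; trust policies are left out for brevity.

```python
from typing import Optional

# Roles listed above; the suffixes and naming scheme are hypothetical.
ROLE_SUFFIXES = (
    "cpu-node-group",
    "gpu-node-group",
    "eks-cluster",
    "core-api",
    "inference",
    "external-secrets",
    "aws-load-balancer-controller",
    "ebs-csi-driver",
    "vpc-cni",
)


def role_settings(deployment: str, boundary_arn: Optional[str] = None) -> list[dict[str, str]]:
    """Per-role settings; a single boundary ARN, if given, is attached to every role."""
    roles = []
    for suffix in ROLE_SUFFIXES:
        role = {"RoleName": f"{deployment}-{suffix}"}
        if boundary_arn is not None:
            role["PermissionsBoundary"] = boundary_arn
        roles.append(role)
    return roles


if __name__ == "__main__":
    # Placeholder boundary policy ARN.
    for role in role_settings("acme", "arn:aws:iam::111122223333:policy/RegulatedBoundary"):
        print(role)
```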

Cluster prerequisites

Before Helm runs, the reference architecture creates the Kubernetes resources that the `poolside-deployment` and `inference-stack` charts expect:

- Namespaces: `poolside` for the platform and `poolside-models` for inference

- A `gp3` StorageClass set as the cluster default, EBS-backed, KMS-encrypted, with `WaitForFirstConsumer` binding

- An optional custom CA bundle ConfigMap for environments with TLS-intercepting proxies or private PKI

- The AWS Load Balancer Controller, which creates ALBs from Kubernetes Ingress resources

- The External Secrets Operator, which syncs the RDS master password from AWS Secrets Manager into a Kubernetes Secret

- The NVIDIA GPU Operator, when the GPU node group is enabled. For required versions of the GPU Operator, driver, and container toolkit, see the Certified stack page for your release.
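For reference, a StorageClass matching the description above (EBS-backed `gp3`, KMS-encrypted, cluster default, `WaitForFirstConsumer` binding) can be written as the following Python dictionary and emitted as JSON, which `kubectl apply -f -` accepts. The class name and KMS key ARN are placeholders; the reference architecture creates this resource itself, so a sketch like this is only useful if you reproduce the architecture by hand.

```python
import json


def gp3_storage_class(kms_key_arn: str, name: str = "gp3") -> dict:
    """Build a default, KMS-encrypted gp3 StorageClass for the EBS CSI driver."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {
            "name": name,
            # Makes this the default class for PVCs that omit storageClassName.
            "annotations": {"storageclass.kubernetes.io/is-default-class": "true"},
        },
        "provisioner": "ebs.csi.aws.com",  # provisioner name of the aws-ebs-csi-driver
        "parameters": {
            "type": "gp3",
            "encrypted": "true",      # StorageClass parameters are strings
            "kmsKeyId": kms_key_arn,  # customer-managed KMS key for EBS volumes
        },
        # Provision the volume only after a pod that uses the claim is scheduled.
        "volumeBindingMode": "WaitForFirstConsumer",
    }


if __name__ == "__main__":
    # Placeholder key ARN; pipe the output to `kubectl apply -f -`.
    print(json.dumps(gp3_storage_class("arn:aws:kms:us-east-1:111122223333:key/EXAMPLE"), indent=2))
```

`WaitForFirstConsumer` matters on EKS because EBS volumes are zonal: delaying provisioning until a pod is scheduled creates the volume in that pod's availability zone.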

Key opinions

The reference architecture commits to the following decisions, which differentiate it from a generic Amazon EKS install. If you reproduce the architecture by hand, follow them to stay aligned with what Poolside support and the rest of this documentation expect.

- Single `terraform apply`: the reference architecture is intended to be applied as one root module, not assembled piecemeal.

- IRSA: AWS API access from pods uses IAM roles for service accounts.

- ALB ingress: traffic enters the cluster through the AWS Load Balancer Controller.

- Public EKS endpoint with CIDR allowlist: the API endpoint is publicly reachable but gated by `cluster_endpoint_public_access_cidrs`. Private-only operation requires running Terraform from inside the VPC.

- AWS-managed RDS master password: the password is managed by AWS and stored in AWS Secrets Manager. It is never written to Terraform state.

- Customer-managed KMS keys: applied to EKS Secrets, RDS, S3, EBS volumes, and application-level encryption.

- Minimum GPU instance type `p5e.48xlarge`: required for the supported model performance profile.
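Because Terraform talks to the public EKS endpoint, the machine running `terraform apply` must be covered by `cluster_endpoint_public_access_cidrs`. The hypothetical pre-flight check below validates that before you apply. Rejecting an empty list and a `/0` entry is a policy choice made for this sketch, not documented behavior of the reference Terraform.

```python
import ipaddress


def check_endpoint_allowlist(cidrs: list[str], operator_ip: str) -> None:
    """Hypothetical pre-flight check for cluster_endpoint_public_access_cidrs.

    Raises ValueError if the allowlist is empty, open to every address,
    or does not include the address Terraform will connect from.
    """
    if not cidrs:
        raise ValueError("cluster_endpoint_public_access_cidrs must not be empty")
    # ip_network() is strict by default and rejects entries with host bits set.
    networks = [ipaddress.ip_network(cidr) for cidr in cidrs]
    if any(net.prefixlen == 0 for net in networks):
        raise ValueError("a /0 entry opens the API endpoint to every address")
    address = ipaddress.ip_address(operator_ip)
    if not any(address in net for net in networks):
        raise ValueError(f"{operator_ip} is not in the allowlist; Terraform cannot reach the EKS API")


if __name__ == "__main__":
    check_endpoint_allowlist(["203.0.113.0/24"], "203.0.113.10")  # documentation ranges
    print("allowlist OK")
```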

Deployment profiles

The reference architecture ships two profiles:

- `platform-only`: the Poolside platform without local model inference. Use this when models are served from another deployment or from an external OpenAI-compatible model API.

- `full`: the platform plus the GPU node group and NVIDIA GPU Operator for local model serving. Recommended for production deployments that serve models in-cluster.

For details, see `docs/profiles.md` in the reference architecture repository.
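The two profiles differ only in their GPU components. The sketch below encodes them as data together with the dependency rules stated on this page: the GPU Operator is installed only with the GPU node group, and local inference needs both. The `Profile` fields and the `choose_profile` and `validate` helpers are hypothetical; the example roots express this through Terraform inputs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Profile:
    """Hypothetical summary of what a deployment profile turns on."""
    gpu_node_group: bool
    gpu_operator: bool
    local_inference: bool


PROFILES = {
    "platform-only": Profile(gpu_node_group=False, gpu_operator=False, local_inference=False),
    "full": Profile(gpu_node_group=True, gpu_operator=True, local_inference=True),
}


def validate(profile: Profile) -> None:
    """Enforce the component dependencies described on this page."""
    if profile.gpu_operator and not profile.gpu_node_group:
        raise ValueError("the NVIDIA GPU Operator requires the GPU node group")
    if profile.local_inference and not profile.gpu_operator:
        raise ValueError("local model inference requires the GPU node group and GPU Operator")


def choose_profile(serve_models_in_cluster: bool) -> str:
    """'full' serves models in-cluster; 'platform-only' uses another deployment or an external API."""
    return "full" if serve_models_in_cluster else "platform-only"


if __name__ == "__main__":
    for name, profile in PROFILES.items():
        validate(profile)
        print(name, profile)
```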

Authentication

The reference architecture supports two authentication patterns:

- External OIDC (default): you bring an existing OIDC-compliant identity provider. The Helm chart receives no OIDC values, and the web UI prompts for the OIDC client configuration on first login.

- Amazon Cognito (optional): set `enable_cognito = true` and Terraform creates a Cognito user pool, app client, and hosted domain. Use this when you want an AWS-native quickstart and don’t already have an external OIDC provider prepared.

For details, see `docs/auth.md` in the reference architecture repository.
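On the Cognito path, Terraform presumably has to hand the platform the user pool's OIDC issuer, which for Amazon Cognito has the form `https://cognito-idp.<region>.amazonaws.com/<user-pool-id>`. The sketch below returns that issuer when Cognito is enabled and nothing on the default external-OIDC path, matching the behavior described above. This page does not say how the reference Terraform passes the issuer to the chart, so the function and its parameters are hypothetical.

```python
from typing import Optional


def oidc_issuer(enable_cognito: bool, region: str, user_pool_id: Optional[str] = None) -> Optional[str]:
    """Hypothetical helper: the OIDC issuer to pass to the platform, if any."""
    if not enable_cognito:
        # External OIDC (default): no OIDC values reach the Helm chart; the web UI
        # prompts for the client configuration on first login.
        return None
    if not user_pool_id:
        raise ValueError("user_pool_id is required when enable_cognito is true")
    # Issuer URL format for Amazon Cognito user pools; the discovery document is
    # served at <issuer>/.well-known/openid-configuration.
    return f"https://cognito-idp.{region}.amazonaws.com/{user_pool_id}"


if __name__ == "__main__":
    print(oidc_issuer(True, "us-east-1", "us-east-1_EXAMPLE"))  # placeholder pool ID
```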

Use the reference architecture

The named entry-point Terraform module is `reference-stack`. The example roots under `aws/examples/` (`full` and `platform-only`) call this module with the appropriate inputs.

You can use the reference architecture in three ways:

- Apply it directly: clone the repository, configure the example for your environment, and run `terraform apply`.

- Fork it: take the example as a starting point and adapt the inputs, modules, or wrapper to your standards.

- Reproduce it by hand: use the architecture and the opinions on this page as a specification, and build the equivalent foundation in your own infrastructure-as-code.
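For the apply-directly path, a common Terraform workflow against one of the example roots is `init`, `plan`, then `apply` of the saved plan. The sketch below builds those commands with Terraform's global `-chdir` option and, by default, prints them instead of running them. The repository path is a placeholder, the environment-specific inputs (for example a `terraform.tfvars` file in the example root) are up to you, and passing `binary="tofu"` for OpenTofu assumes a version listed on the repository's Compatibility page.

```python
import subprocess
from pathlib import Path

EXAMPLE_ROOTS = ("full", "platform-only")  # example roots under aws/examples/


def terraform_commands(repo: Path, example: str, binary: str = "terraform") -> list[list[str]]:
    """Build init/plan/apply commands for one example root (a sketch, not the repository's tooling)."""
    if example not in EXAMPLE_ROOTS:
        raise ValueError(f"unknown example root: {example!r}")
    root = repo / "aws" / "examples" / example
    chdir = f"-chdir={root}"  # global option: run as if started in the example root
    return [
        [binary, chdir, "init"],
        [binary, chdir, "plan", "-out=tfplan"],  # plan file is written inside the example root
        [binary, chdir, "apply", "tfplan"],      # apply exactly the reviewed plan
    ]


def run(commands: list[list[str]], dry_run: bool = True) -> None:
    for cmd in commands:
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)  # stop at the first failing step


if __name__ == "__main__":
    run(terraform_commands(Path("reference_architectures"), "full"))
```

Applying a saved plan keeps the single-root-module opinion intact while giving you a review point between `plan` and `apply`.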

Related resources

Poolside docs:

- Install the Poolside platform on Amazon EKS

- Model inference on Amazon EKS

- AWS cost modeling

- Cloud deployment overview

Reference architecture docs (poolsideai/reference_architectures):

- Architecture overview: What Terraform creates, layer by layer.

- Prerequisites: Required tools, AWS permissions, and bundle layout.

- Deployment guide: End-to-end deployment, including the staged-rollout pattern.

- Customizing: Supported overrides for sizing, auth, GPU configuration, and regulated environments.

- Model checkpoints: How model weights get from disk to S3 to GPU pods.

- Authentication: External OIDC compared with Cognito.

- Advanced: Permissions boundaries, custom CA bundles, AMI overrides, log aggregation.

- Compatibility: Supported Terraform and OpenTofu versions.